Face detection and tracking in a video stream

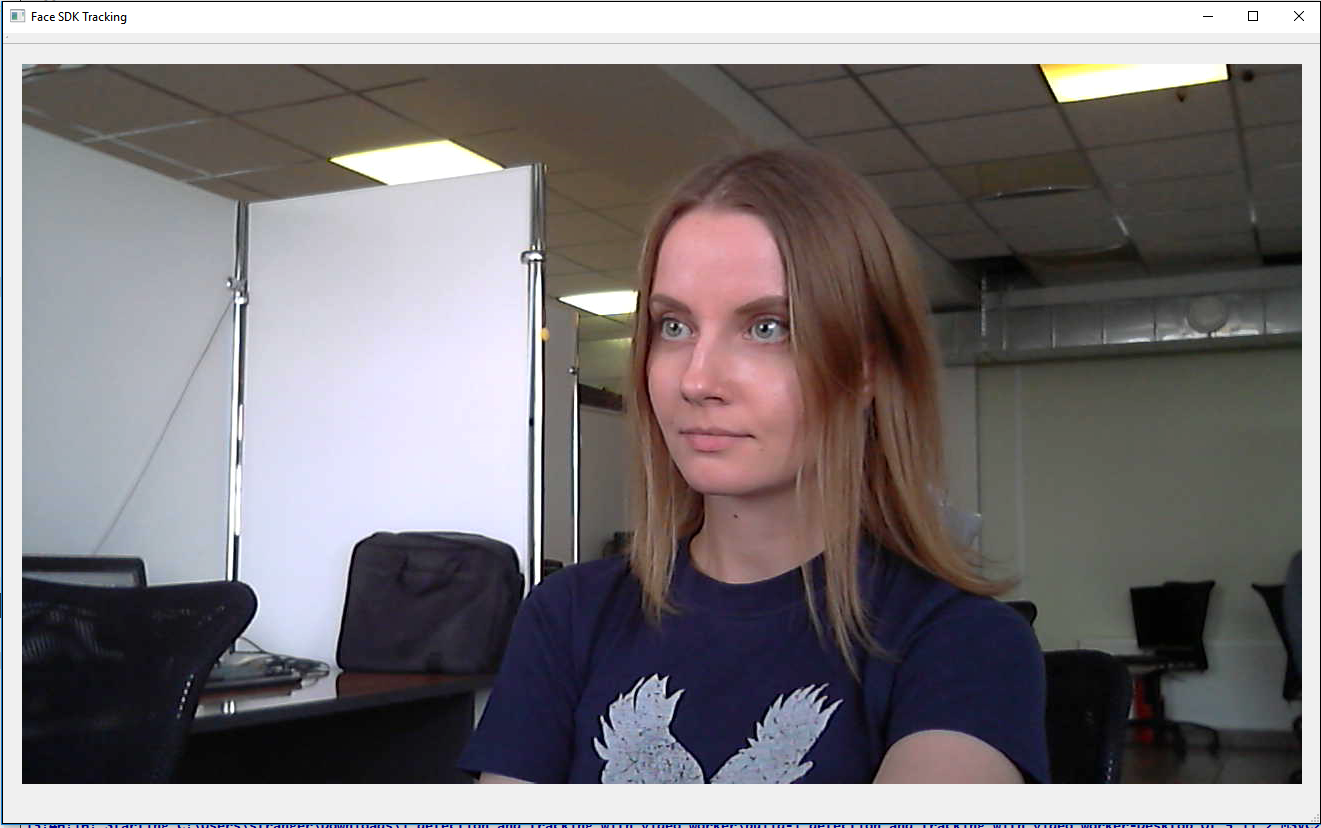

In this tutorial you'll learn how to detect and track faces in a video stream from your camera using the VideoWorker object from Face SDK API. Tracked faces are highlighted with a green rectangle.

Besides Face SDK and Qt, you'll need a camera connected to your PC (for example, a webcam). You can build and run this project either on Windows or Ubuntu (v16.04 or higher).

Find the tutorial project in Face SDK: examples/tutorials/detection_and_tracking_with_video_worker

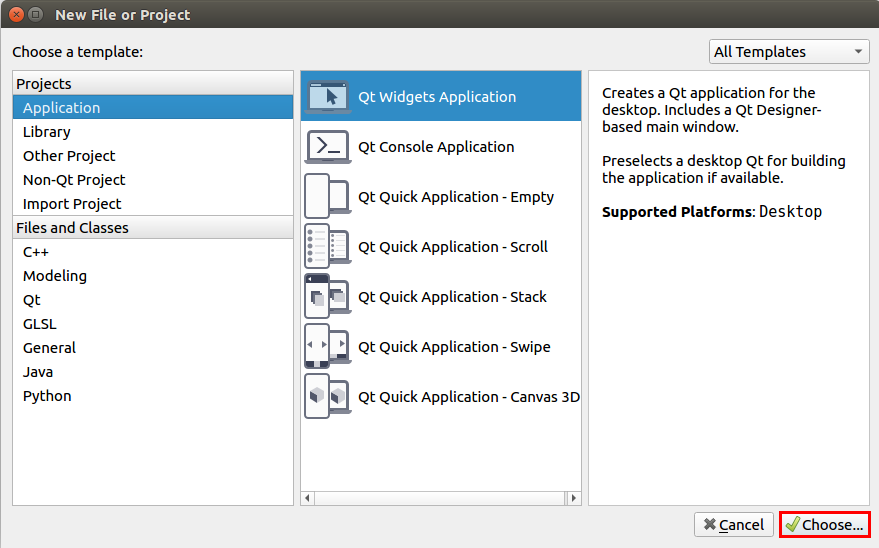

Create a Qt project

- Run Qt and create a new project: File > New File or Project > Application > Qt Widgets Application > Choose...

- Name it, for example, 1_detection_and_tracking_with_video_worker and choose the path. Click Next and choose the necessary platform for your project in the Kit Selection section, for example, Desktop. Click Details and select the Release build configuration (Debug is not required in this project).

- Leave settings as default in the Class Information window and click Next. Then leave settings as default in the Project Management window and click Finish.

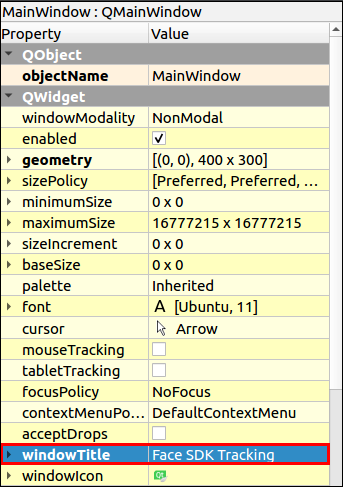

- Title the main window of our application: double-click the file Forms > mainwindow.ui in the project tree. Specify the window name in the Properties tab (the right part of the editor): windowTitle > Face SDK Tracking.

- To lay out widgets in a grid, drag-and-drop the Grid Layout object to the MainWindow widget. Then right-click MainWindow to open its context menu and select Layout > Lay Out in a Grid. The Grid Layout object will be stretched to the size of the MainWindow widget. Rename the layout: layoutName > viewLayout.

- To run the project, click Run (Ctrl+R). You'll see an empty window with the title Face SDK Tracking.

Display the image from camera

- To use a camera in our project, add Qt multimedia widgets. To do this, add the following line to the .pro file:

detection_and_tracking_with_video_worker.pro

...

QT += multimedia multimediawidgets

...

- To receive the image from a camera, create a new class QCameraCapture: Add New > C++ > C++ Class > Choose… > Class name – QCameraCapture > Base class – QObject > Next > Project Management (default settings) > Finish. In qcameracapture.h, create a new class CameraSurface, which will provide the frames from the camera via the present callback.

qcameracapture.h

#include <QCamera>

#include <QAbstractVideoSurface>

#include <QVideoSurfaceFormat>

#include <QVideoFrame>

class CameraSurface : public QAbstractVideoSurface

{

Q_OBJECT

public:

explicit CameraSurface(QObject* parent = 0);

bool present(const QVideoFrame& frame);

QList<QVideoFrame::PixelFormat> supportedPixelFormats(

QAbstractVideoBuffer::HandleType type = QAbstractVideoBuffer::NoHandle) const;

bool start(const QVideoSurfaceFormat& format);

signals:

void frameUpdatedSignal(const QVideoFrame&);

};

- Describe the implementation of this class in the qcameracapture.cpp file. Define the CameraSurface::CameraSurface constructor and the supportedPixelFormats method. The list returned by CameraSurface::supportedPixelFormats contains the image formats supported by Face SDK (RGB24, BGR24, NV12, NV21). Some cameras deliver the image in the RGB32 format, so this format is added to the list as well. Face SDK doesn't support RGB32, so such images are converted from RGB32 to RGB24 later.

qcameracapture.cpp

#include "qcameracapture.h"

#include <QDebug>

...

CameraSurface::CameraSurface(QObject* parent) :

QAbstractVideoSurface(parent)

{

}

QList<QVideoFrame::PixelFormat> CameraSurface::supportedPixelFormats(

QAbstractVideoBuffer::HandleType handleType) const

{

if (handleType == QAbstractVideoBuffer::NoHandle)

{

return QList<QVideoFrame::PixelFormat>()

<< QVideoFrame::Format_RGB32

<< QVideoFrame::Format_RGB24

<< QVideoFrame::Format_BGR24

<< QVideoFrame::Format_NV12

<< QVideoFrame::Format_NV21;

}

return QList<QVideoFrame::PixelFormat>();

}

- Check the image format in the CameraSurface::start method. If the format is supported, start the surface. Otherwise, log a message and return false.

qcameracapture.cpp

...

bool CameraSurface::start(const QVideoSurfaceFormat& format)

{

if (!supportedPixelFormats(format.handleType()).contains(format.pixelFormat()))

{

qDebug() << format.handleType() << " " << format.pixelFormat() << " - format is not supported.";

return false;

}

return QAbstractVideoSurface::start(format);

}

- Process a new frame in the CameraSurface::present method. If the frame is valid, emit the frameUpdatedSignal signal to update the frame. Later, this signal will be connected to the frameUpdatedSlot slot, where the frame will be processed.

qcameracapture.cpp

...

bool CameraSurface::present(const QVideoFrame& frame)

{

if (!frame.isValid())

{

return false;

}

emit frameUpdatedSignal(frame);

return true;

}

- The QCameraCapture constructor takes the pointer to a parent widget (parent), the camera id and the image resolution (width and height), which are stored in the corresponding class fields.

qcameracapture.h

class QCameraCapture : public QObject

{

Q_OBJECT

public:

explicit QCameraCapture(

QWidget* parent,

const int cam_id,

const int res_width,

const int res_height);

...

private:

QObject* _parent;

int cam_id;

int res_width;

int res_height;

};

- Add the m_camera and m_surface objects to the QCameraCapture class.

qcameracapture.h

#include <QScopedPointer>

class QCameraCapture : public QObject

{

...

private:

...

QScopedPointer<QCamera> m_camera;

QScopedPointer<CameraSurface> m_surface;

};

- To throw exceptions, include the stdexcept header file in qcameracapture.cpp. Save the pointer to the parent widget, the camera id and the image resolution in the initializer list of the QCameraCapture::QCameraCapture constructor. In the constructor body, get the list of available cameras. The list should contain at least one camera; otherwise, the runtime_error exception is thrown. Also make sure that the list contains a camera with the requested id. Create a camera and connect its signals to the slots that handle them. When the camera status changes, the camera sends the statusChanged signal. Create the CameraSurface object to receive the frames from the camera. Connect the CameraSurface::frameUpdatedSignal signal to the QCameraCapture::frameUpdatedSlot slot.

qcameracapture.cpp

#include <QCameraInfo>

#include <stdexcept>

...

QCameraCapture::QCameraCapture(

QWidget* parent,

const int cam_id,

const int res_width,

const int res_height) : QObject(parent),

_parent(parent),

cam_id(cam_id),

res_width(res_width),

res_height(res_height)

{

const QList<QCameraInfo> availableCameras = QCameraInfo::availableCameras();

qDebug() << "Available cameras:";

for (const QCameraInfo &cameraInfo : availableCameras)

{

qDebug() << cameraInfo.deviceName() << " " << cameraInfo.description();

}

if(availableCameras.empty())

{

throw std::runtime_error("List of available cameras is empty");

}

if (!(cam_id >= 0 && cam_id < availableCameras.size()))

{

throw std::runtime_error("Invalid camera index");

}

const QCameraInfo cameraInfo = availableCameras[cam_id];

m_camera.reset(new QCamera(cameraInfo));

connect(m_camera.data(), &QCamera::statusChanged, this, &QCameraCapture::onStatusChanged);

connect(m_camera.data(), QOverload<QCamera::Error>::of(&QCamera::error), this, &QCameraCapture::cameraError);

m_surface.reset(new CameraSurface());

m_camera->setViewfinder(m_surface.data());

connect(m_surface.data(), &CameraSurface::frameUpdatedSignal, this, &QCameraCapture::frameUpdatedSlot);

}

- Stop the camera in the QCameraCapture destructor.

qcameracapture.h

class QCameraCapture : public QObject

{

...

explicit QCameraCapture(...);

virtual ~QCameraCapture();

...

};

qcameracapture.cpp

QCameraCapture::~QCameraCapture()

{

if (m_camera)

{

m_camera->stop();

}

}

- Add the QCameraCapture::frameUpdatedSlot method, which processes the CameraSurface::frameUpdatedSignal signal. In this method, convert the QVideoFrame object to QImage and emit a signal that a new frame is available. Define the FramePtr type, a shared pointer to the image. If the image is received in the RGB32 format, convert it to RGB888.

qcameracapture.h

#include <memory>

class QCameraCapture : public QObject

{

Q_OBJECT

public:

typedef std::shared_ptr<QImage> FramePtr;

...

signals:

void newFrameAvailable();

...

public slots:

void frameUpdatedSlot(const QVideoFrame&);

...

};

qcameracapture.cpp

void QCameraCapture::frameUpdatedSlot(

const QVideoFrame& frame)

{

QVideoFrame cloneFrame(frame);

cloneFrame.map(QAbstractVideoBuffer::ReadOnly);

if (cloneFrame.pixelFormat() == QVideoFrame::Format_RGB24 ||

cloneFrame.pixelFormat() == QVideoFrame::Format_RGB32)

{

QImage image((const uchar*)cloneFrame.bits(),

cloneFrame.width(),

cloneFrame.height(),

QVideoFrame::imageFormatFromPixelFormat(cloneFrame.pixelFormat()));

if (image.format() == QImage::Format_RGB32)

{

image = image.convertToFormat(QImage::Format_RGB888);

}

FramePtr frame = FramePtr(new QImage(image));

}

cloneFrame.unmap();

emit newFrameAvailable();

}
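To make the RGB32 to RGB888 step more concrete, here is a minimal Qt-free sketch of what such a conversion does to the pixel data. It assumes that each pixel is a 32-bit value laid out as 0xffRRGGBB, which is how QImage::Format_RGB32 is documented. The function name rgb32ToRgb888 is hypothetical and is not part of Qt or Face SDK; in the tutorial the conversion itself is done by QImage::convertToFormat.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical helper (not a Qt or Face SDK function).
// Input: pixels stored as 0xffRRGGBB, one 32-bit value per pixel.
// Output: tightly packed bytes in R, G, B order, three bytes per pixel.
std::vector<std::uint8_t> rgb32ToRgb888(const std::vector<std::uint32_t>& pixels)
{
    std::vector<std::uint8_t> out;
    out.reserve(pixels.size() * 3);

    for (const std::uint32_t p : pixels)
    {
        out.push_back(static_cast<std::uint8_t>((p >> 16) & 0xff)); // red
        out.push_back(static_cast<std::uint8_t>((p >> 8) & 0xff));  // green
        out.push_back(static_cast<std::uint8_t>(p & 0xff));         // blue
        // The top byte (0xff padding) is dropped.
    }

    return out;
}
```

The output is a quarter smaller than the input, because the unused top byte of every pixel is discarded. Real images may also have padding at the end of each scanline, which this sketch ignores.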

- Add the methods that start and stop the camera to QCameraCapture.

qcameracapture.h

class QCameraCapture : public QObject

{

...

public:

...

void start();

void stop();

...

};

qcameracapture.cpp

void QCameraCapture::start()

{

m_camera->start();

}

void QCameraCapture::stop()

{

m_camera->stop();

}

- In the QCameraCapture::onStatusChanged method, handle the change of the camera status to LoadedStatus. Check if the camera supports the requested resolution. If it does, set the requested resolution. Otherwise, set the default resolution (640 x 480), specified by the default_res_width and default_res_height static fields.

qcameracapture.h

class QCameraCapture : public QObject

{

...

private slots:

void onStatusChanged();

...

private:

static const int default_res_width;

static const int default_res_height;

...

};

qcameracapture.cpp

const int QCameraCapture::default_res_width = 640;

const int QCameraCapture::default_res_height = 480;

...

void QCameraCapture::onStatusChanged()

{

if (m_camera->status() == QCamera::LoadedStatus)

{

bool found = false;

const QList<QSize> supportedResolutions = m_camera->supportedViewfinderResolutions();

for (const QSize &resolution : supportedResolutions)

{

if (resolution.width() == res_width &&

resolution.height() == res_height)

{

found = true;

}

}

if (!found)

{

qDebug() << "Resolution: " << res_width << "x" << res_height << " unsupported";

qDebug() << "Set default resolution: " << default_res_width << "x" << default_res_height;

res_width = default_res_width;

res_height = default_res_height;

}

QCameraViewfinderSettings viewFinderSettings;

viewFinderSettings.setResolution(

res_width,

res_height);

m_camera->setViewfinderSettings(viewFinderSettings);

}

}
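The resolution check above boils down to "use the requested size if the camera lists it, otherwise fall back to a default". A minimal sketch of that decision without Qt is shown below. The Resolution alias and the chooseResolution function are hypothetical names introduced only for this illustration; in the tutorial the list comes from supportedViewfinderResolutions.

```cpp
#include <cassert>
#include <utility>
#include <vector>

// (width, height) pair; stands in for QSize in this Qt-free sketch.
using Resolution = std::pair<int, int>;

// Hypothetical helper: returns the requested resolution if it is in the
// list of supported resolutions, otherwise returns the fallback.
Resolution chooseResolution(
    const std::vector<Resolution>& supported,
    const Resolution& requested,
    const Resolution& fallback)
{
    for (const Resolution& candidate : supported)
    {
        if (candidate == requested)
        {
            return requested;
        }
    }

    return fallback;
}
```

With a supported list of 640x480 and 1280x720, a request for 1280x720 is returned unchanged, while a request for 1920x1080 yields the fallback. Note that the fallback itself is not checked against the list, which mirrors the tutorial code.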

- Display the camera error messages, if any, in the cameraError method.

qcameracapture.h

class QCameraCapture : public QObject

{

...

private slots:

...

void cameraError();

...

};

qcameracapture.cpp

void QCameraCapture::cameraError()

{

qDebug() << "Camera error: " << m_camera->errorString();

}

- Create a new Worker class: Add New > C++ > C++ Class > Choose… > Class name – Worker > Next > Finish. The Worker class saves the last frame from the camera via the addFrame method and returns this frame via the getDataToDraw method.

worker.h

#include "qcameracapture.h"

#include <mutex>

class Worker

{

public:

// Data to be drawn

struct DrawingData

{

bool updated;

QCameraCapture::FramePtr frame;

DrawingData() : updated(false)

{

}

};

Worker();

void addFrame(QCameraCapture::FramePtr frame);

void getDataToDraw(DrawingData& data);

private:

DrawingData _drawing_data;

std::mutex _drawing_data_mutex;

};

worker.cpp

void Worker::getDataToDraw(DrawingData &data)

{

const std::lock_guard<std::mutex> guard(_drawing_data_mutex);

data = _drawing_data;

_drawing_data.updated = false;

}

void Worker::addFrame(QCameraCapture::FramePtr frame)

{

const std::lock_guard<std::mutex> guard(_drawing_data_mutex);

_drawing_data.frame = frame;

_drawing_data.updated = true;

}
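The Worker code above follows a common pattern: a mutex-protected slot that keeps only the most recent value together with an "updated" flag. A minimal sketch of the same idea without Qt might look as follows. The LatestFrame class and its add and take methods are hypothetical names, and std::string stands in for QImage.

```cpp
#include <cassert>
#include <memory>
#include <mutex>
#include <string>

// Qt-free stand-in for the Worker idea: keeps only the most recent frame.
// All names here are hypothetical; std::string plays the role of QImage.
class LatestFrame
{
public:
    using FramePtr = std::shared_ptr<std::string>;

    // Called by the producer (the camera side): overwrite the stored frame.
    void add(FramePtr frame)
    {
        const std::lock_guard<std::mutex> guard(_mutex);
        _frame = frame;
        _updated = true;
    }

    // Called by the consumer (the drawing side): copy out the stored frame.
    // Returns true only if a new frame arrived since the previous call.
    bool take(FramePtr& out)
    {
        const std::lock_guard<std::mutex> guard(_mutex);
        out = _frame;
        const bool was_updated = _updated;
        _updated = false;
        return was_updated;
    }

private:
    std::mutex _mutex;
    FramePtr _frame;
    bool _updated = false;
};
```

If the producer calls add twice before the consumer calls take, the older frame is simply dropped. That is acceptable for a live preview, where only the newest image matters.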

- Frames will be displayed in the

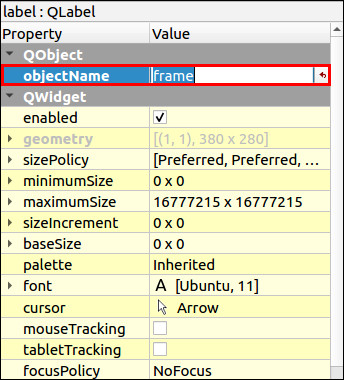

ViewWindowclass. Create a ViewWindow widget: Add > New > Qt > Designer Form Class > Choose... > Template > Widget (default settings) > Next > Name – ViewWindow > Project Management (default settings) > Finish. - In the editor (Design) drag-and-drop the Grid Layout object to the widget. To do this, call ViewWindow context menu by right-clicking and select Layout > Lay Out in a Grid. The Grid Layout object lets you place widgets in a grid and is stretched to the size of the ViewWindow widget. Then add the Label object to gridLayout and name it frame: QObject > objectName > frame.

- Delete the default text in QLabel > text.

- Add the _qCamera camera to the ViewWindow class and initialize it in the constructor. Using the camera_image_width and camera_image_height static fields, set the required image resolution to 1280x720. The _running flag stores the camera status: true means that the camera is running, false means that the camera is stopped.

viewwindow.h

#include "qcameracapture.h"

#include <QWidget>

namespace Ui {

class ViewWindow;

}

class ViewWindow : public QWidget

{

Q_OBJECT

public:

explicit ViewWindow(QWidget *parent);

~ViewWindow();

private:

Ui::ViewWindow *ui;

static const int camera_image_width;

static const int camera_image_height;

QScopedPointer<QCameraCapture> _qCamera;

bool _running;

};

viewwindow.cpp

const int ViewWindow::camera_image_width = 1280;

const int ViewWindow::camera_image_height = 720;

ViewWindow::ViewWindow(QWidget *parent) :

QWidget(parent),

ui(new Ui::ViewWindow())

{

ui->setupUi(this);

_running = false;

_qCamera.reset(new QCameraCapture(

this,

0, // camera id

camera_image_width,

camera_image_height));

}

- Add the Worker object to the ViewWindow class and initialize it in the constructor.

viewwindow.h

#include "worker.h"

class ViewWindow : public QWidget

{

...

private:

...

std::shared_ptr<Worker> _worker;

};

viewwindow.cpp

ViewWindow::ViewWindow(QWidget *parent) :

QWidget(parent),

ui(new Ui::ViewWindow())

{

...

_worker = std::make_shared<Worker>();

...

}

- Frames will be passed to Worker from QCameraCapture. Modify the QCameraCapture and ViewWindow classes.

qcameracapture.h

...

class Worker;

class QCameraCapture : public QObject

{

...

public:

...

QCameraCapture(

...

std::shared_ptr<Worker> worker,

...);

...

private:

...

std::shared_ptr<Worker> _worker;

...

};

qcameracapture.cpp

#include "worker.h"

...

QCameraCapture::QCameraCapture(

...

std::shared_ptr<Worker> worker,

...) :

_worker(worker),

...

void QCameraCapture::frameUpdatedSlot(

const QVideoFrame& frame)

{

if (cloneFrame.pixelFormat() == QVideoFrame::Format_RGB24 ||

cloneFrame.pixelFormat() == QVideoFrame::Format_RGB32)

{

...

FramePtr frame = FramePtr(new QImage(image));

_worker->addFrame(frame);

}

...

}

viewwindow.cpp

ViewWindow::ViewWindow(QWidget *parent) :

QWidget(parent),

ui(new Ui::ViewWindow())

{

...

_qCamera.reset(new QCameraCapture(

this,

_worker,

...));

}

- The QCameraCapture::newFrameAvailable signal is processed in the ViewWindow::draw slot, which displays the camera image on the frame widget.

viewwindow.h

class ViewWindow : public QWidget

{

...

private slots:

void draw();

...

};

viewwindow.cpp

ViewWindow::ViewWindow(QWidget *parent) :

QWidget(parent),

ui(new Ui::ViewWindow())

{

...

connect(_qCamera.data(), &QCameraCapture::newFrameAvailable, this, &ViewWindow::draw);

}

void ViewWindow::draw()

{

Worker::DrawingData data;

_worker->getDataToDraw(data);

// If data is the same, image is not redrawn

if(!data.updated)

{

return;

}

// Drawing

const QImage image = data.frame->copy();

ui->frame->setPixmap(QPixmap::fromImage(image));

}

- Start the camera in the runProcessing method and stop it in stopProcessing.

viewwindow.h

class ViewWindow : public QWidget

{

public:

...

void runProcessing();

void stopProcessing();

...

};

viewwindow.cpp

void ViewWindow::stopProcessing()

{

if (!_running)

return;

_qCamera->stop();

_running = false;

}

void ViewWindow::runProcessing()

{

if (_running)

return;

_qCamera->start();

_running = true;

}

- Stop the camera in the ~ViewWindow destructor.

viewwindow.cpp

ViewWindow::~ViewWindow()

{

stopProcessing();

delete ui;

}

- Connect the camera widget to the main application window: create a view window and start processing in the MainWindow constructor. Stop the processing in the ~MainWindow destructor.

mainwindow.h

...

class ViewWindow;

class MainWindow : public QMainWindow

{

...

private:

Ui::MainWindow *ui;

QScopedPointer<ViewWindow> _view;

};

mainwindow.cpp

#include "viewwindow.h"

#include <QMessageBox>

MainWindow::MainWindow(QWidget *parent) :

QMainWindow(parent),

ui(new Ui::MainWindow)

{

ui->setupUi(this);

try

{

_view.reset(new ViewWindow(this));

ui->viewLayout->addWidget(_view.data());

_view->runProcessing();

}

catch(std::exception &ex)

{

QMessageBox::critical(

this,

"Initialization Error",

ex.what());

throw;

}

}

MainWindow::~MainWindow()

{

if (_view)

{

_view->stopProcessing();

}

delete ui;

}

...

- Modify the main function to catch possible exceptions.

main.cpp

#include <QDebug>

int main(int argc, char *argv[])

{

QApplication a(argc, argv);

try

{

MainWindow w;

w.show();

return a.exec();

}

catch(std::exception& ex)

{

qDebug() << "Exception caught: " << ex.what();

}

catch(...)

{

qDebug() << "Unknown exception caught";

}

return 0;

}

- Run the project. You'll see a window with the image from your camera.

Note: On Windows the image from some cameras can be flipped or mirrored, due to some peculiarities of the image processing by Qt. In this case process the image, for example, using QImage::mirrored().

Detect and track faces in video stream

- Download and extract Face SDK distribution as described in the section Getting Started. The distribution root folder contains the bin and lib folders, depending on your platform.

- To detect and track faces on the image from your camera, integrate Face SDK into your project. In the .pro file, set the FACE_SDK_PATH variable to the path to the Face SDK root folder. Also, add the include folder from Face SDK, which contains the necessary headers, to INCLUDEPATH. If the path is not specified, qmake stops with the error “Empty path to Face SDK”.

detection_and_tracking_with_video_worker.pro

...

# Set path to FaceSDK root directory

FACE_SDK_PATH =

isEmpty(FACE_SDK_PATH) {

error("Empty path to Face SDK")

}

DEFINES += FACE_SDK_PATH=\\\"$$FACE_SDK_PATH\\\"

INCLUDEPATH += $${FACE_SDK_PATH}/include

...

Note: When you specify the path to Face SDK, use forward slashes ("/").

- [Linux only] To build the project with Face SDK, add the following option to the .pro file:

detection_and_tracking_with_video_worker.pro

...

unix: LIBS += -ldl

...

- Besides, specify the path to the facerec library and configuration files. Create the FaceSdkParameters class, which will store the configuration (Add New > C++ > C++ Header File > FaceSdkParameters), and use it in MainWindow.

facesdkparameters.h

#include <string>

// Processing and face sdk settings.

struct FaceSdkParameters

{

std::string face_sdk_path = FACE_SDK_PATH;

};

mainwindow.h

#include <QMainWindow>

#include "facesdkparameters.h"

...

class MainWindow : public QMainWindow

{

private:

...

FaceSdkParameters _face_sdk_parameters;

};

- Integrate Face SDK: add the necessary headers to mainwindow.h and the initFaceSdkService method to initialize Face SDK services. Create a FacerecService object, a component used to create Face SDK modules, by calling the FacerecService::createService static method. Pass the path to the library and the path to the folder with the configuration files. Wrap the call in a try-catch block to catch possible exceptions. If the initialization is successful, the initFaceSdkService function returns true. Otherwise, it returns false and shows a message box with the error.

mainwindow.h

#include <facerec/libfacerec.h>

class MainWindow : public QMainWindow

{

...

private:

bool initFaceSdkService();

private:

...

pbio::FacerecService::Ptr _service;

...

};

mainwindow.cpp

bool MainWindow::initFaceSdkService()

{

// Integrate Face SDK

QString error;

try

{

#ifdef _WIN32

std::string facerec_lib_path = _face_sdk_parameters.face_sdk_path + "/bin/facerec.dll";

#else

std::string facerec_lib_path = _face_sdk_parameters.face_sdk_path + "/lib/libfacerec.so";

#endif

_service = pbio::FacerecService::createService(

facerec_lib_path,

_face_sdk_parameters.face_sdk_path + "/conf/facerec");

return true;

}

catch(const std::exception &e)

{

error = tr("Can't init Face SDK service: '") + e.what() + "'.";

}

catch(...)

{

error = tr("Can't init Face SDK service: ... exception.");

}

QMessageBox::critical(

this,

tr("Face SDK error"),

error + "\n" +

tr("Try to change face sdk parameters."));

return false;

}

- Add a service initialization call to the MainWindow::MainWindow constructor. In case of an error, throw the std::runtime_error exception.

mainwindow.cpp

MainWindow::MainWindow(QWidget *parent) :

QMainWindow(parent),

ui(new Ui::MainWindow)

{

...

if (!initFaceSdkService())

{

throw std::runtime_error("Face SDK initialization error");

}

...

}

- Pass FacerecService and Face SDK parameters to the ViewWindow constructor, where they will be used to create the VideoWorker tracking module. Save the service and parameters in the class fields.

mainwindow.cpp

MainWindow::MainWindow(QWidget *parent) :

QMainWindow(parent),

ui(new Ui::MainWindow)

{

...

_view.reset(new ViewWindow(

this,

_service,

_face_sdk_parameters));

...

}

viewwindow.h

#include "facesdkparameters.h"

#include <facerec/libfacerec.h>

...

class ViewWindow : public QWidget

{

Q_OBJECT

public:

ViewWindow(

QWidget *parent,

pbio::FacerecService::Ptr service,

FaceSdkParameters face_sdk_parameters);

...

private:

...

pbio::FacerecService::Ptr _service;

FaceSdkParameters _face_sdk_parameters;

};

viewwindow.cpp

...

ViewWindow::ViewWindow(

QWidget *parent,

pbio::FacerecService::Ptr service,

FaceSdkParameters face_sdk_parameters) :

QWidget(parent),

ui(new Ui::ViewWindow()),

_service(service),

_face_sdk_parameters(face_sdk_parameters)

...

- Modify the Worker class to interact with Face SDK. The Worker class takes the FacerecService pointer and the name of the configuration file of the tracking module. It creates the VideoWorker component from Face SDK, which is responsible for face tracking, passes the frames to it and processes the callbacks, which contain the tracking results. Implement the constructor: create the VideoWorker object, specifying the configuration file, the recognizer method (empty in this case, as faces are not recognized in this project) and the number of video streams (1 in this case, as only one camera is used).

worker.h

#include <facerec/libfacerec.h>

class Worker

{

...

public:

...

Worker(

const pbio::FacerecService::Ptr service,

const std::string videoworker_config);

private:

...

pbio::VideoWorker::Ptr _video_worker;

};

worker.cpp

#include "worker.h"

#include "videoframe.h"

Worker::Worker(

const pbio::FacerecService::Ptr service,

const std::string videoworker_config)

{

pbio::FacerecService::Config vwconfig(videoworker_config);

_video_worker = service->createVideoWorker(

vwconfig,

"", // Recognition isn't used

1, // streams_count

0, // processing_threads_count

0); // matching_threads_count

}

Note: In addition to face detection and tracking, VideoWorker can be used for face recognition on several video streams. In this case, specify the recognizer method and the processing_threads_count and matching_threads_count parameters.

- Subscribe to the callbacks from the VideoWorker class: TrackingCallback (a face is detected and tracked) and TrackingLostCallback (a face is lost). Remove them in the destructor.

worker.h

class Worker

{

public:

...

Worker(...);

~Worker();

...

private:

...

static void TrackingCallback(

const pbio::VideoWorker::TrackingCallbackData &data,

void* const userdata);

static void TrackingLostCallback(

const pbio::VideoWorker::TrackingLostCallbackData &data,

void* const userdata);

int _tracking_callback_id;

int _tracking_lost_callback_id;

};

worker.cpp

Worker::Worker(...)

{

...

_tracking_callback_id =

_video_worker->addTrackingCallbackU(

TrackingCallback,

this);

_tracking_lost_callback_id =

_video_worker->addTrackingLostCallbackU(

TrackingLostCallback,

this);

}

Worker::~Worker()

{

_video_worker->removeTrackingCallback(_tracking_callback_id);

_video_worker->removeTrackingLostCallback(_tracking_lost_callback_id);

}
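The callbacks above are static member functions that receive the object through a void* userdata pointer. A minimal Face SDK-free sketch of this registration pattern is shown below. EventSource, Listener and all their members are hypothetical names that only imitate the shape of the VideoWorker calls; they are not the Face SDK API.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical C-style callback: an event value plus an opaque pointer.
typedef void (*EventCallback)(int track_id, void* userdata);

// Hypothetical event source; stands in for VideoWorker in this sketch.
class EventSource
{
public:
    // Registers a callback and returns its id.
    int addCallback(EventCallback callback, void* userdata)
    {
        Entry entry;
        entry.callback = callback;
        entry.userdata = userdata;
        _entries.push_back(entry);
        return static_cast<int>(_entries.size()) - 1;
    }

    // Disables the callback with the given id.
    void removeCallback(int id)
    {
        _entries.at(static_cast<std::size_t>(id)).callback = nullptr;
    }

    // Calls every callback that is still registered.
    void fire(int track_id) const
    {
        for (const Entry& entry : _entries)
        {
            if (entry.callback)
            {
                entry.callback(track_id, entry.userdata);
            }
        }
    }

private:
    struct Entry
    {
        EventCallback callback;
        void* userdata;
    };

    std::vector<Entry> _entries;
};

// Subscribes in the constructor and unsubscribes in the destructor.
class Listener
{
public:
    explicit Listener(EventSource& source) : _source(source)
    {
        _callback_id = _source.addCallback(&Listener::onEvent, this);
    }

    ~Listener()
    {
        _source.removeCallback(_callback_id);
    }

    int lastTrackId() const { return _last_track_id; }

private:
    // Static function: recovers the object from userdata, then updates it.
    static void onEvent(int track_id, void* userdata)
    {
        assert(userdata);
        Listener& self = *static_cast<Listener*>(userdata);
        self._last_track_id = track_id;
    }

    EventSource& _source;
    int _callback_id = -1;
    int _last_track_id = -1;
};
```

Removing the callback in the destructor matters because the source keeps a raw pointer to the listener. Without it, an event fired after the listener is destroyed would dereference a dangling pointer.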

- Include the cassert header to use assertions. The result in TrackingCallback is received in the form of the TrackingCallbackData structure, which stores data about all faces being tracked. The preview output is synchronized with the result output. The frame passed to VideoWorker cannot be displayed immediately, because it is processed a bit later. Therefore, frames are stored in a queue. When a result is obtained, find the frame that matches it. VideoWorker may skip some frames under heavy load, so some frames have no matching result. In the algorithm below, frames are removed from the queue until the frame that matches the received result is found. Save the detected faces for each frame to use them later for visualization. To synchronize the shared data changes in TrackingCallback and TrackingLostCallback, use std::mutex.

worker.h

#include <cassert>

#include <map>

...

class Worker

{

...

public:

// Face data

struct FaceData

{

pbio::RawSample::Ptr sample;

bool lost;

int frame_id;

FaceData() : lost(true)

{

}

};

// Drawing data

struct DrawingData

{

...

int frame_id;

// map<track_id, face>

std::map<int, FaceData> faces;

...

};

...

};

worker.cpp

#include <iostream>

...

// static

void Worker::TrackingCallback(

const pbio::VideoWorker::TrackingCallbackData &data,

void * const userdata)

{

// Checking arguments

assert(userdata);

// frame_id - frame used for drawing, samples - info about faces to be drawn

const int frame_id = data.frame_id;

const std::vector<pbio::RawSample::Ptr> &samples = data.samples;

// User information - pointer to Worker

// Pass the pointer

Worker &worker = *reinterpret_cast<Worker*>(userdata);

// Get the frame with frame_id

QCameraCapture::FramePtr frame;

{

const std::lock_guard<std::mutex> guard(worker._frames_mutex);

auto& frames = worker._frames;

// Searching in worker._frames

for(;;)

{

// Frames should already be received

assert(frames.size() > 0);

// Check that frame_id are received in ascending order

assert(frames.front().first <= frame_id);

if(frames.front().first == frame_id)

{

// Frame is found

frame = frames.front().second;

frames.pop();

break;

}

else

{

// Frame was skipped (i.e. the worker._frames.front())

std::cout << "skipped " << ":" << frames.front().first << std::endl;

frames.pop();

}

}

}

// Update the information

{

const std::lock_guard<std::mutex> guard(worker._drawing_data_mutex);

// Frame

worker._drawing_data.frame = frame;

worker._drawing_data.frame_id = frame_id;

worker._drawing_data.updated = true;

// Samples

for(size_t i = 0; i < samples.size(); ++i)

{

FaceData &face = worker._drawing_data.faces[samples[i]->getID()];

face.frame_id = samples[i]->getFrameID(); // May differ from frame_id

face.lost = false;

face.sample = samples[i];

}

}

}
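The frame lookup in TrackingCallback can be studied in isolation. The sketch below reproduces the queue logic without Face SDK or Qt: frames are popped from the front until the one with the requested id is found, and older frames count as skipped. The names Frame, FrameQueue and takeFrameForResult are hypothetical. Unlike the tutorial code, which asserts that the frame is present, this version returns false when the queue runs out.

```cpp
#include <cassert>
#include <queue>
#include <string>
#include <utility>

// std::string stands in for QCameraCapture::FramePtr in this sketch.
using Frame = std::string;
using FrameQueue = std::queue<std::pair<int, Frame> >;

// Hypothetical helper. Pops frames in ascending id order until the frame
// with result_frame_id is at the front, then moves it into `out`.
// `skipped` receives the number of older frames that were dropped.
// Returns false if no frame with that id is in the queue.
bool takeFrameForResult(
    FrameQueue& frames,
    const int result_frame_id,
    Frame& out,
    int& skipped)
{
    skipped = 0;

    while (!frames.empty() && frames.front().first <= result_frame_id)
    {
        if (frames.front().first == result_frame_id)
        {
            // Frame is found
            out = frames.front().second;
            frames.pop();
            return true;
        }

        // Frame has no result: it was skipped by the processing side
        ++skipped;
        frames.pop();
    }

    return false;
}
```

For a queue holding frames 1 to 4 and a result for frame 3, the function drops frames 1 and 2, returns frame 3 and leaves frame 4 in the queue for the next result.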

- Implement TrackingLostCallback, which marks that the tracked face has left the frame.

worker.cpp

...

// static

void Worker::TrackingLostCallback(

const pbio::VideoWorker::TrackingLostCallbackData &data,

void* const userdata)

{

const int track_id = data.track_id;

// User information - pointer to Worker

// Pass the pointer

Worker &worker = *reinterpret_cast<Worker*>(userdata);

{

const std::lock_guard<std::mutex> guard(worker._drawing_data_mutex);

FaceData &face = worker._drawing_data.faces[track_id];

assert(!face.lost);

face.lost = true;

}

}

- VideoWorker receives the frames via the pbio::IRawImage interface. Create the videoframe.h header file (Add New > C++ > C++ Header File > VideoFrame) and implement the pbio::IRawImage interface for the QImage class in it. The pbio::IRawImage interface provides access to the pointer to the image data, its format, width and height.

videoframe.h

#include "qcameracapture.h"

#include <pbio/IRawImage.h>

class VideoFrame : public pbio::IRawImage

{

public:

VideoFrame(){}

virtual ~VideoFrame(){}

virtual const unsigned char* data() const throw();

virtual int32_t width() const throw();

virtual int32_t height() const throw();

virtual int32_t format() const throw();

QCameraCapture::FramePtr& frame();

const QCameraCapture::FramePtr& frame() const;

private:

QCameraCapture::FramePtr _frame;

};

inline

const unsigned char* VideoFrame::data() const throw()

{

if(_frame->isNull() || _frame->size().isEmpty())

{

return NULL;

}

return _frame->bits();

}

inline

int32_t VideoFrame::width() const throw()

{

return _frame->width();

}

inline

int32_t VideoFrame::height() const throw()

{

return _frame->height();

}

inline

int32_t VideoFrame::format() const throw()

{

if(_frame->format() == QImage::Format_Grayscale8)

{

return (int32_t) FORMAT_GRAY;

}

if(_frame->format() == QImage::Format_RGB888)

{

return (int32_t) FORMAT_RGB;

}

return -1;

}

inline

QCameraCapture::FramePtr& VideoFrame::frame()

{

return _frame;

}

inline

const QCameraCapture::FramePtr& VideoFrame::frame() const

{

return _frame;

}

- In the addFrame method, pass the frames to VideoWorker. Any exceptions that occur during the callback processing are rethrown in the checkExceptions method. Create the _frames queue to store the frames. This queue contains the frame id and the corresponding image, so that the frame matching the processing result can be found in TrackingCallback. To synchronize the changes in shared data, use std::mutex.

worker.h

#include <queue>

...

class Worker

{

...

private:

...

std::queue<std::pair<int, QCameraCapture::FramePtr> > _frames;

std::mutex _frames_mutex;

...

};

worker.cpp

#include "videoframe.h"

...

void Worker::addFrame(

QCameraCapture::FramePtr frame)

{

VideoFrame video_frame;

video_frame.frame() = frame;

const std::lock_guard<std::mutex> guard(_frames_mutex);

const int stream_id = 0;

const int frame_id = _video_worker->addVideoFrame(

video_frame,

stream_id);

_video_worker->checkExceptions();

_frames.push(std::make_pair(frame_id, video_frame.frame()));

}

- Modify the getDataToDraw method: do not draw the faces for which TrackingLostCallback was called.

worker.cpp

void Worker::getDataToDraw(DrawingData &data)

{

...

// Delete the samples, for which TrackingLostCallback was called

{

for(auto it = _drawing_data.faces.begin();

it != _drawing_data.faces.end();)

{

const std::map<int, FaceData>::iterator i = it;

++it; // i can be deleted, so increment it at this stage

FaceData &face = i->second;

// Keep the faces, which are being tracked

if(!face.lost)

continue;

_drawing_data.updated = true;

// Delete faces

_drawing_data.faces.erase(i);

}

}

...

}
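The loop above erases map entries while iterating, which is safe only because the iterator is advanced before the erase. Since C++11, std::map::erase returns the iterator that follows the removed element, so the same cleanup can be written a little more directly. The sketch below shows this variant on a simplified Face structure; Face and eraseLostFaces are hypothetical names, not part of the tutorial project.

```cpp
#include <cassert>
#include <map>

// Simplified stand-in for Worker::FaceData: only the `lost` flag is kept.
struct Face
{
    bool lost;
};

// Hypothetical helper: removes all faces marked as lost from the map
// (track_id -> face) and returns how many entries were removed.
int eraseLostFaces(std::map<int, Face>& faces)
{
    int removed = 0;

    for (auto it = faces.begin(); it != faces.end();)
    {
        if (it->second.lost)
        {
            // erase() returns the iterator to the next element
            it = faces.erase(it);
            ++removed;
        }
        else
        {
            ++it;
        }
    }

    return removed;
}
```

Both forms do the same job. The version in the tutorial also works with pre-C++11 compilers, while this one avoids the extra iterator copy.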

- Modify the QCameraCapture class to catch the exceptions that can be thrown in Worker::addFrame.

qcameracapture.cpp

#include <QMessageBox>

...

void QCameraCapture::frameUpdatedSlot(

const QVideoFrame& frame)

{

...

if (cloneFrame.pixelFormat() == QVideoFrame::Format_RGB24 ||

cloneFrame.pixelFormat() == QVideoFrame::Format_RGB32)

{

QImage image(...);

if (image.format() == QImage::Format_RGB32)

{

image = image.convertToFormat(QImage::Format_RGB888);

}

try

{

FramePtr frame = FramePtr(new QImage(image));

_worker->addFrame(frame);

}

catch(std::exception& ex)

{

stop();

cloneFrame.unmap();

QMessageBox::critical(

_parent,

tr("Face SDK error"),

ex.what());

return;

}

}

...

}

- Create the DrawFunction class, which will contain a method to draw the tracking results on the image: Add New > C++ > C++ Class > Choose… > Class name – DrawFunction.

drawfunction.h

#include "worker.h"

class DrawFunction

{

public:

DrawFunction();

static QImage Draw(

const Worker::DrawingData &data);

};

drawfunction.cpp

#include "drawfunction.h"

#include <QPainter>

DrawFunction::DrawFunction()

{

}

// static

QImage DrawFunction::Draw(

const Worker::DrawingData &data)

{

QImage result = data.frame->copy();

QPainter painter(&result);

// Clone the information about faces

std::vector<std::pair<int, Worker::FaceData> > faces_data(data.faces.begin(), data.faces.end());

// Draw faces

for(const auto &face_data : faces_data)

{

const Worker::FaceData &face = face_data.second;

painter.save();

// Visualize faces in the frame

if(face.frame_id == data.frame_id && !face.lost)

{

const pbio::RawSample& sample = *face.sample;

QPen pen;

// Draw the bounding box

{

// Get the bounding box

const pbio::RawSample::Rectangle bounding_box = sample.getRectangle();

pen.setWidth(3);

pen.setColor(Qt::green);

painter.setPen(pen);

painter.drawRect(bounding_box.x, bounding_box.y, bounding_box.width, bounding_box.height);

}

}

painter.restore();

}

painter.end();

return result;

}

- In the ViewWindow constructor, pass the FacerecService pointer and the name of the configuration file of the tracking module when creating Worker. In the ViewWindow::draw method, draw the tracking result on the image by calling DrawFunction::Draw.

viewwindow.cpp

#include "drawfunction.h"

...

ViewWindow::ViewWindow(...) :

...

{

...

_worker = std::make_shared<Worker>(

_service,

"video_worker_lbf.xml");

...

}

...

void ViewWindow::draw()

{

...

// Drawing

const QImage image = DrawFunction::Draw(data);

...

}

- Run the project. Now faces in the image are detected and tracked (they are highlighted with a green rectangle). You can find more information about using the VideoWorker object in the Video Stream Processing section.